A Look At Google Cloud Platform’s New Balanced SSD

DoiT International architects and engineers work closely with the major cloud vendors to regularly test new enhancements, provide feedback, and educate others about them, especially for our growing customer base, which continuously pushes the limits of these platforms.

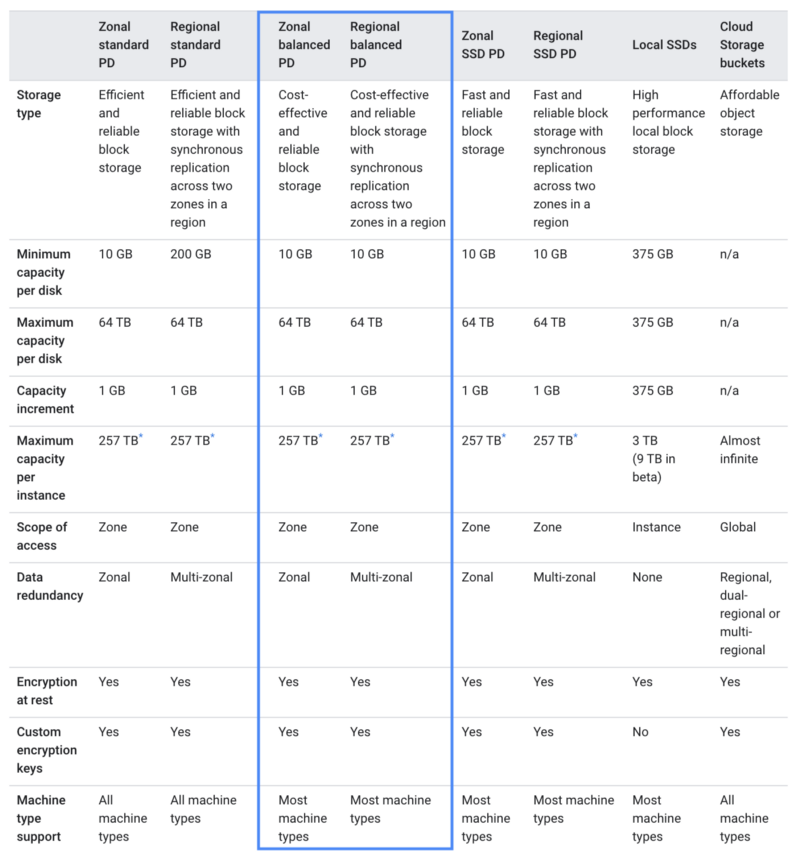

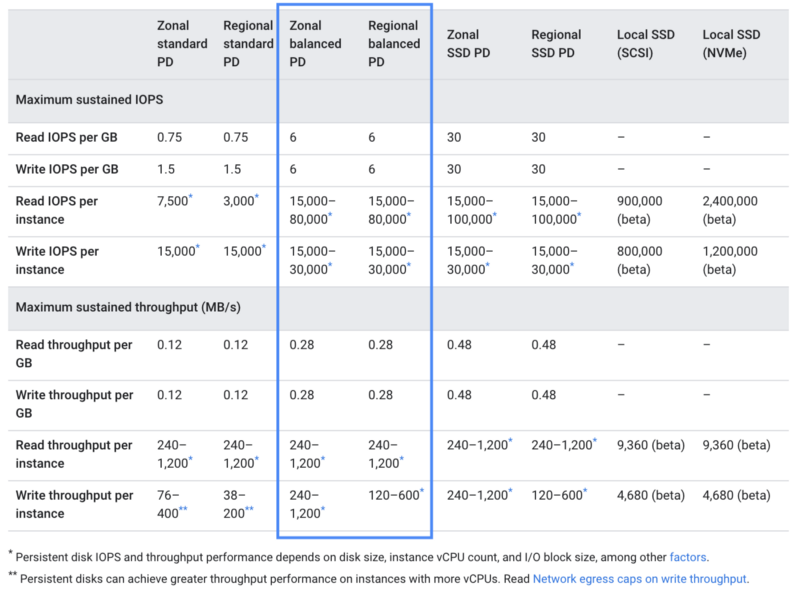

One enhancement we’ve eagerly anticipated is a new persistent disk type on Google Cloud Platform called Balanced SSD (pd-balanced). It is SSD-backed and balances performance and cost, at an attractive price: $0.10/GB per month versus $0.17/GB for the existing SSD persistent disk (pd-ssd) at the time of this writing, a saving of roughly 40%.
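As a quick check of that figure, here is the arithmetic for 1,000 GB provisioned for one month at the list prices quoted above (prices differ by region and change over time, so treat these numbers as illustrative only):

```latex
\begin{aligned}
\text{pd-ssd:}      \quad & 1000\ \text{GB} \times \$0.17/\text{GB} = \$170 \text{ per month} \\
\text{pd-balanced:} \quad & 1000\ \text{GB} \times \$0.10/\text{GB} = \$100 \text{ per month} \\
\text{savings:}     \quad & \frac{170 - 100}{170} = \frac{70}{170} \approx 0.41 \quad (\text{about } 41\%)
\end{aligned}
```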

When we announced the GA release to DoiT International customers who rely heavily on SSD persistent disks, the response was overwhelmingly positive (a few examples are below). We felt compelled to share the news, along with some tips, with the rest of the world.

“Is there a ‘more emails like this’ button?”

“I’ll see if this is something we can use, thank you!”

“I love getting proactive notices like this. I wish I had time to keep abreast of every change GCP makes, but I don’t.”

Prerequisite To Enable Balanced SSD for GKE

Using Balanced SSD with managed Kubernetes (GKE) workloads requires the Compute Engine persistent disk CSI driver add-on, which you can enable with the following command:

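```shell
# Enable the persistent disk CSI driver add-on on an existing cluster.
# Add --zone <zone> or --region <region> to match your cluster's location.
gcloud beta container clusters update <YOUR-CLUSTER-NAME> \
  --update-addons=GcePersistentDiskCsiDriver=ENABLED
```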

Note: according to Google’s service terms, the CSI driver is currently not covered by an SLA.

Warning: upgrading your cluster is a blocking operation, and the cluster can be unavailable for 10 minutes or more.
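Once the update finishes, you may want to confirm the add-on is actually on before creating any pd-balanced volumes. The sketch below wraps two checks in shell functions; the function names are hypothetical, and it assumes gcloud is authenticated against the right project and kubectl points at the cluster. The first function reads the add-on's `enabled` field from the cluster description, and the second looks for the driver's CSIDriver object, `pd.csi.storage.gke.io` (the same provisioner name used in the StorageClass later in this article).

```shell
# Print the PD CSI driver add-on's "enabled" field from the cluster config.
# Extra arguments (e.g. --zone <zone> or --region <region>) are passed through.
# Usage: pd_csi_enabled <cluster-name> --zone <zone>
pd_csi_enabled() {
  local cluster="$1"
  shift
  gcloud container clusters describe "$cluster" "$@" \
    --format="value(addonsConfig.gcePersistentDiskCsiDriverConfig.enabled)"
}

# Succeed if the driver is registered in the cluster kubectl currently targets.
# Usage: pd_csi_registered && echo "CSI driver registered"
pd_csi_registered() {
  kubectl get csidriver pd.csi.storage.gke.io >/dev/null 2>&1
}
```

For example, `pd_csi_enabled my-cluster --zone us-central1-a` should report the add-on as enabled once the update completes (the cluster name and zone are placeholders).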

If you plan to move your Kubernetes workloads to Balanced SSD, see the example StorageClass and volume claim template changes later in this article.

Creating Zonal or Regional Persistent Disks

Google’s documentation covers creating both zonal and regional persistent disks; when following it, specify the pd-balanced disk type.
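From the command line, creating a zonal or a regional Balanced SSD disk looks roughly like the following sketch. The function names are hypothetical wrappers around `gcloud compute disks create`; a regional disk is replicated across the two zones you list in `--replica-zones`, which must belong to the region you pass.

```shell
# Create a zonal balanced persistent disk.
# Usage: create_zonal_balanced_disk <disk-name> <size, e.g. 100GB> <zone>
create_zonal_balanced_disk() {
  gcloud compute disks create "$1" --type=pd-balanced --size="$2" --zone="$3"
}

# Create a regional balanced persistent disk, replicated across two zones
# of the same region.
# Usage: create_regional_balanced_disk <disk-name> <size> <region> <zone-a,zone-b>
create_regional_balanced_disk() {
  gcloud compute disks create "$1" --type=pd-balanced --size="$2" \
    --region="$3" --replica-zones="$4"
}
```

For example: `create_zonal_balanced_disk my-data 100GB us-central1-a` or `create_regional_balanced_disk my-data 200GB us-central1 us-central1-a,us-central1-b` (names, sizes, and locations are placeholders).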

Migrating Existing Disks To Balanced SSD

One strategy is to mount the new Balanced SSD disk alongside your existing disk and copy the data over. Alternatively, follow the steps below to create a new disk from a snapshot.

1. Snapshot. If you are changing a boot disk, stop the instance first. For a non-boot disk, stopping is optional: you can snapshot while the instance is running, but there is a risk of losing data that has not yet been written to disk.

- https://cloud.google.com/compute/docs/disks/create-snapshots

- https://cloud.google.com/compute/docs/disks/snapshot-best-practices

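```shell
# Stop the instance (required for a boot disk; optional for a data disk,
# but recommended if you are concerned about data loss)
gcloud compute instances stop <instance-name> --zone <zone>

# Create a snapshot of the disk
# (for a regional disk, use --region <region> instead of --zone <zone>)
gcloud compute disks snapshot <disk-name> --zone <zone> --snapshot-names <snapshot-name>
```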
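If you script the migration, or create the snapshot with `--async`, it can help to wait until the snapshot reports a `READY` status before moving on to step 2. A minimal polling sketch follows; the function name, the default of 60 attempts, and the `POLL_SECONDS` variable are assumptions of this example, not gcloud features.

```shell
# Wait until a snapshot's status is READY, polling every POLL_SECONDS (default 10).
# Returns non-zero if it is still not READY after max-attempts polls (default 60).
# Usage: wait_for_snapshot <snapshot-name> [max-attempts]
wait_for_snapshot() {
  local name="$1" attempts="${2:-60}" status i
  for ((i = 1; i <= attempts; i++)); do
    status="$(gcloud compute snapshots describe "$name" --format='value(status)')"
    if [ "$status" = "READY" ]; then
      return 0
    fi
    echo "snapshot $name is ${status:-unknown}, waiting..." >&2
    sleep "${POLL_SECONDS:-10}"
  done
  echo "snapshot $name is not READY after $attempts checks" >&2
  return 1
}
```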

2. Create. Create a new pd-balanced disk from your snapshot.

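```shell
# Create a new balanced disk from the snapshot, in the instance's zone.
# --size must be at least the size of the original disk.
gcloud compute disks create <disk-name> \
  --type pd-balanced \
  --source-snapshot <snapshot-name> \
  --size <size-in-GB> \
  --zone <zone>
```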
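Before detaching anything, a quick check that the new disk really was created as pd-balanced can save a confusing rollback later. The hypothetical helper below reads the disk's `type` field (a URL) and keeps only its last path segment with gcloud's `basename()` format transform.

```shell
# Fail unless <disk-name> in <zone> has the pd-balanced disk type.
# Usage: verify_disk_type <disk-name> <zone>
verify_disk_type() {
  local disk="$1" zone="$2" disk_type
  disk_type="$(gcloud compute disks describe "$disk" --zone="$zone" \
    --format='value(type.basename())')"
  if [ "$disk_type" != "pd-balanced" ]; then
    echo "disk $disk has type '${disk_type}', expected pd-balanced" >&2
    return 1
  fi
  echo "disk $disk is pd-balanced"
}
```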

3. Detach and reattach. If you are changing a boot disk, edit the instance and replace its boot disk with the new disk. If you are changing a non-boot disk, detach the existing disk and attach the new one.

- https://cloud.google.com/compute/docs/disks/detach-reattach-boot-disk

- https://cloud.google.com/sdk/gcloud/reference/compute/instances/detach-disk

- https://cloud.google.com/sdk/gcloud/reference/compute/instances/attach-disk

```shell
# Unmount the volume (non-boot disk only; run this on the instance)
sudo umount /dev/disk/by-id/google-DEVICE_NAME

# Detach the old disk
gcloud compute instances detach-disk <instance-name> --disk <old-disk> --zone <zone>

# Attach the new disk (add --boot when replacing a boot disk)
gcloud compute instances attach-disk <instance-name> --disk <new-disk> --zone <zone>

# Start the instance (if it was stopped)
gcloud compute instances start <instance-name> --zone <zone>

# SSH into the instance and mount the volume
sudo lsblk  # note the new disk's device name (sdb, or a partition such as sdb1)
sudo mkdir -p /path/to/mount
sudo mount -o discard,defaults /dev/sdb /path/to/mount
```
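A mount made with `mount` on the command line does not survive a reboot. Google’s documentation on mounting non-boot disks suggests adding an /etc/fstab entry that refers to the disk by UUID; the sketch below builds such a line. The helper name and the ext4 default are assumptions (pass the real filesystem type if it differs), and the `nofail` option lets the instance keep booting if the disk is ever detached.

```shell
# Print an /etc/fstab line that mounts <device>'s filesystem by UUID at <mount-point>.
# Mounting by UUID keeps working if the device name changes (sdb vs sdc).
# Usage (on the instance):
#   sudo cp /etc/fstab /etc/fstab.backup
#   fstab_entry /dev/sdb /path/to/mount | sudo tee -a /etc/fstab
fstab_entry() {
  local device="$1" mount_point="$2" fs_type="${3:-ext4}" uuid
  uuid="$(sudo blkid -s UUID -o value "$device")"
  if [ -z "$uuid" ]; then
    echo "no filesystem UUID found on $device" >&2
    return 1
  fi
  printf 'UUID=%s %s %s discard,defaults,nofail 0 2\n' "$uuid" "$mount_point" "$fs_type"
}
```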

4. Test. Start the instance and confirm that the OS boots as expected. If there is a problem, stop the instance and revert to the old disk.
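If the new boot disk misbehaves, the serial console output usually shows why, and rolling back is the reverse of step 3. Both are sketched below as hypothetical helpers; they assume the old disk still exists and that stopping the instance is acceptable.

```shell
# Show the last <lines> lines (default 50) of the instance's serial console
# output, which includes the boot log.
# Usage: boot_log <instance> <zone> [lines]
boot_log() {
  gcloud compute instances get-serial-port-output "$1" --zone="$2" | tail -n "${3:-50}"
}

# Roll back a boot disk swap: stop the instance, detach the new boot disk,
# reattach the old disk as the boot disk, and start the instance again.
# Stops at the first failing command.
# Usage: revert_boot_disk <instance> <zone> <new-disk> <old-disk>
revert_boot_disk() {
  local instance="$1" zone="$2" new_disk="$3" old_disk="$4"
  gcloud compute instances stop "$instance" --zone="$zone" || return 1
  gcloud compute instances detach-disk "$instance" --disk="$new_disk" --zone="$zone" || return 1
  gcloud compute instances attach-disk "$instance" --disk="$old_disk" --zone="$zone" --boot || return 1
  gcloud compute instances start "$instance" --zone="$zone"
}
```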

Note: disk cloning does not work here, because you can’t change the disk type when cloning a disk.
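For the first strategy mentioned at the start of this section (attaching the new Balanced SSD disk alongside the existing one and copying the data), the copy itself can be as simple as the sketch below. It assumes both disks are already mounted (the mount points are yours to choose), that you run it from a root shell so ownership is preserved, and that the application writing to the old disk is stopped so the copy is consistent. It uses `cp -a` for portability; `rsync -a` is a common alternative when you want to resume an interrupted copy. The function name is hypothetical.

```shell
# Copy everything from the old disk's mount point to the new disk's mount point,
# preserving ownership, permissions, timestamps, and symlinks (cp -a).
# Usage: copy_disk_contents <old-mount> <new-mount>
copy_disk_contents() {
  local src="$1" dst="$2"
  if [ ! -d "$src" ] || [ ! -d "$dst" ]; then
    echo "both $src and $dst must be existing (mounted) directories" >&2
    return 1
  fi
  # "$src/." copies the directory's contents, including dotfiles,
  # rather than creating a nested copy of the directory itself.
  cp -a "$src/." "$dst/"
}
```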

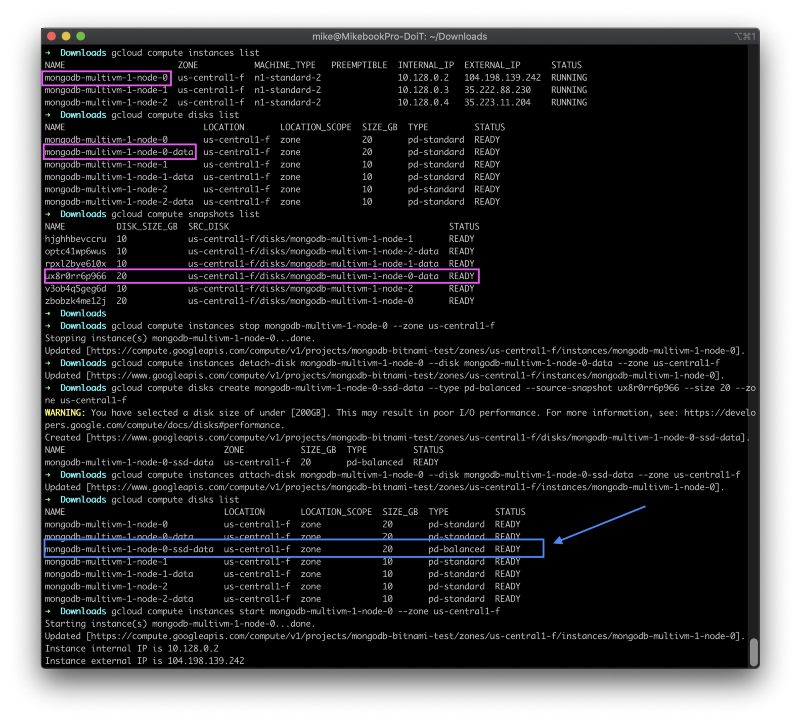

Working Example

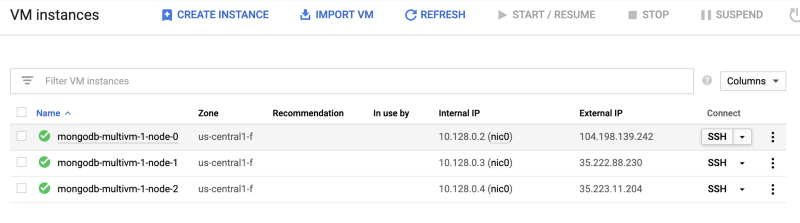

To test this, I used a sandbox project where I was experimenting with Bitnami’s MongoDB multi-VM deployment. I replaced a standard persistent disk with a Balanced SSD and confirmed that my data still existed. See below.

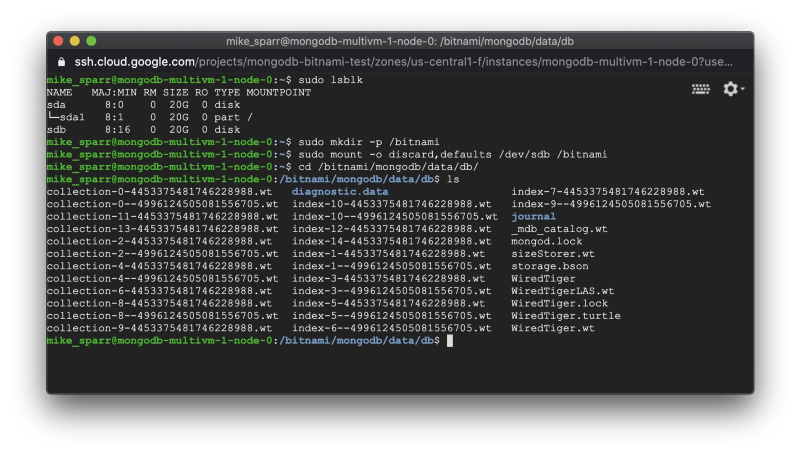

After attaching the new pd-balanced disk and restarting the instance, I connected to the instance with the browser-based SSH terminal.

I then listed the attached devices, mounted the new Balanced SSD disk, and confirmed that all my MongoDB files still existed.
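To make a check like this more rigorous than eyeballing a file listing, you could mount the old disk read-only (`mount -o ro`) next to the new one and compare checksums of every file. This is a sketch under the assumption that both copies are mounted, MongoDB is stopped so the files are not changing, and you have enough privileges to read the data directory; the helper names are hypothetical.

```shell
# Print "sha256  ./relative/path" for every regular file under <dir>, sorted by
# path, so two copies of the same data produce identical listings.
# Usage: tree_checksums <dir>
tree_checksums() {
  (cd "$1" && find . -type f -print0 | sort -z | xargs -0 -r sha256sum)
}

# Succeed only if both directories exist and hold the same files with the same contents.
# Usage: same_contents /mnt/old-disk /path/to/mount && echo "data matches"
same_contents() {
  if [ ! -d "$1" ] || [ ! -d "$2" ]; then
    return 1
  fi
  diff <(tree_checksums "$1") <(tree_checksums "$2") >/dev/null
}
```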

Success! I hope this example is useful and saves you from jumping between articles to figure out how to take advantage of this new product.

GKE: Updating Storage Class And Volume Claims

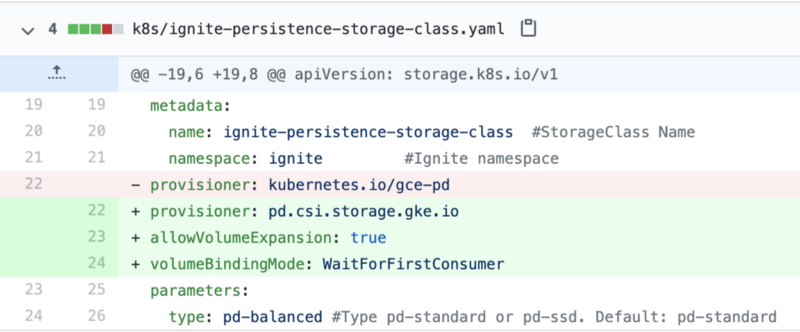

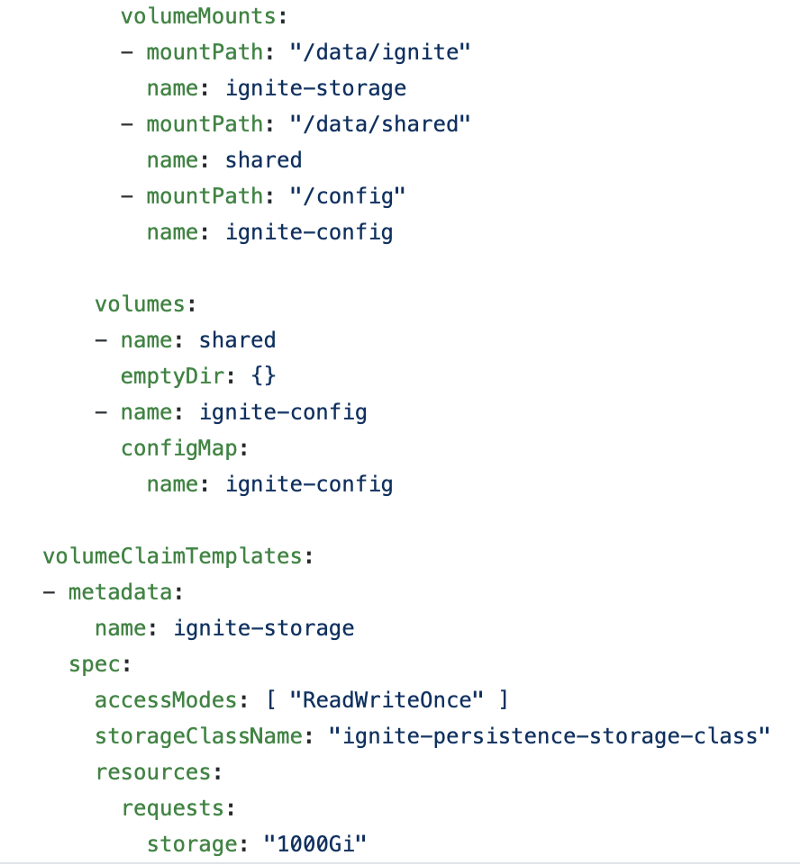

If you are running workloads on GKE and are comfortable with the CSI driver’s support status, then after upgrading your cluster you only need to add or edit a StorageClass and reference it from the PersistentVolumeClaims in your workloads.

One of my colleagues upgraded a demo GKE project for Apache Ignite to use the new Balanced SSD storage; see the StorageClass they used below.

```yaml
kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: ignite-persistence-storage-class # <your-name-here>
  # No namespace: StorageClass is a cluster-scoped resource
provisioner: pd.csi.storage.gke.io
allowVolumeExpansion: true
volumeBindingMode: WaitForFirstConsumer
parameters:
  type: pd-balanced # Default: pd-standard
```

If your workloads were not yet declaring a StorageClass, only minor edits are required: set storageClassName in their volume claims (or volume claim templates) to the new class.
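As an illustration of those minor edits, the sketch below applies a PersistentVolumeClaim that requests the `ignite-persistence-storage-class` StorageClass. The function name, the claim name, the `ignite` namespace, and the 10Gi size are hypothetical; in a StatefulSet, the same `storageClassName` line goes under each entry of `spec.volumeClaimTemplates[].spec` instead.

```shell
# Apply a PersistentVolumeClaim backed by the balanced-SSD StorageClass.
# Claim name, namespace, and size are placeholders for this example.
# Usage: apply_balanced_pvc
apply_balanced_pvc() {
  kubectl apply -f - <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ignite-data        # hypothetical claim name
  namespace: ignite
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: ignite-persistence-storage-class
  resources:
    requests:
      storage: 10Gi        # hypothetical size
EOF
}
```

Because the StorageClass uses `volumeBindingMode: WaitForFirstConsumer`, the claim shows as Pending until a Pod that uses it is scheduled; the disk is then provisioned in that Pod’s zone.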

Keeping You Informed

We continue to work with our cloud partners to provide in-the-field feedback based on the customer use cases and challenges we see every day. We are thrilled that Google has introduced solutions like the eagerly anticipated Balanced SSD.

Stay tuned for more updates on products we feel can help our existing and future customers.

Finally, if you’d like to work on my team, we are hiring Cloud Architects; see http://careers.doit.com for details.